You asked AI to "build a login page" and it was done in 5 minutes. But the code had a SQL injection vulnerability hiding in it, and password hashing was missing too. Vibe coding made MIT Technology Review’s list of 10 Breakthrough Technologies for 2026, yet the irony is becoming reality: code that is built fast gets breached fast.

What Is This?

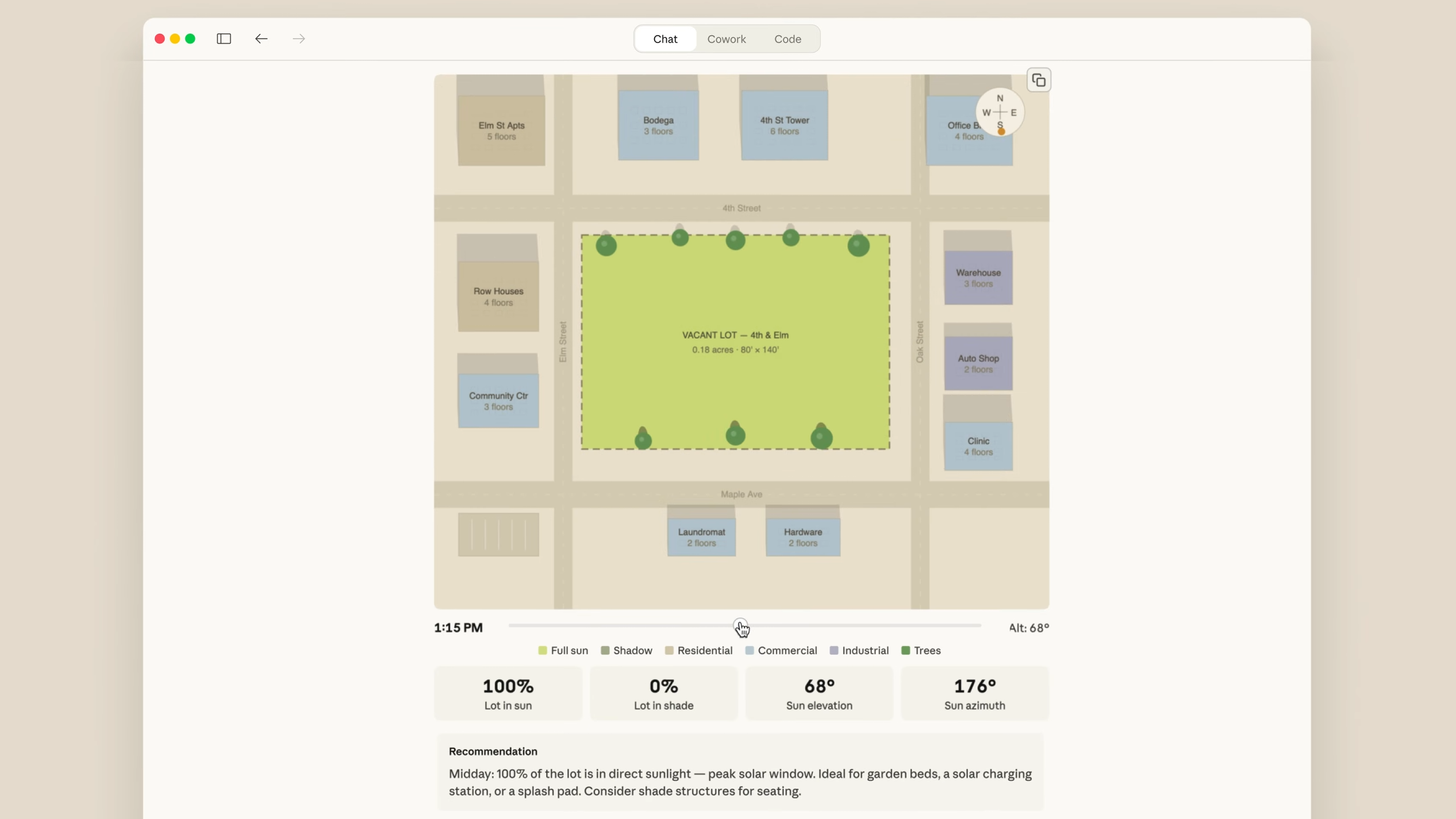

In February 2025, Andrej Karpathy, an OpenAI co-founder and former Tesla AI lead, posted on X: "There’s a new kind of coding I call ‘vibe coding’, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists." In practice, you give instructions to AI in natural language, accept the generated code without review, and when errors pop up, paste the error message right back to AI.

The term spread like wildfire. Merriam-Webster listed it as a "slang & trending" expression in March 2025, and MIT Technology Review included it in its 10 Breakthrough Technologies list for 2026 under the name "Generative Coding." The same list entry noted that Meta, Google, and Microsoft are generating 25–30% of their new internal code with AI.

The numbers look rosy on the surface. 74% of developers report feeling more productive, commit frequency is up 1.4–1.9x, and greenfield feature completion time has dropped 20–45%. Among startups with 20 or fewer employees, over 60% use vibe coding daily.

The problem is what lurks behind that speed.

What Changes?

Built fast, breached fast

In December 2025, CodeRabbit’s analysis of 470 open-source GitHub PRs delivered striking results. Code co-authored with AI contained about 1.7x as many issues as human-written code: 10.83 issues per PR versus 6.45. Security vulnerabilities fared even worse.

| Vulnerability Type | Human-Written Code | AI-Generated Code (Risk Level) |
|---|---|---|
| XSS Vulnerabilities | Baseline (1x) | 2.74x higher |
| Insecure Object References | Baseline (1x) | 1.91x higher |
| Password Handling Errors | Baseline (1x) | 1.88x higher |
| Insecure Deserialization | Baseline (1x) | 1.82x higher |
| Logic/Correctness Errors | Baseline (1x) | 1.75x higher |

A security startup called Tenzai took it a step further. They built the same 3 apps with each of 5 major vibe coding tools (Claude Code, OpenAI Codex, Cursor, Replit, and Devin) for a total of 15 apps, and found 69 vulnerabilities. About 6 of those were critical-severity. Every tool produced SSRF (Server-Side Request Forgery) vulnerabilities, and not a single app set up CSRF protection or security headers.
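To make the "security headers" gap concrete, here is a minimal sketch of what adding them can look like, written as standard-library WSGI middleware. The header names are standard HTTP response headers, but the values are illustrative defaults rather than anything the Tenzai study prescribes, and `with_security_headers`, `demo_app`, and `collect_headers` are hypothetical names made up for this example. It does not cover CSRF protection or SSRF, which need their own handling.

```python
from wsgiref.util import setup_testing_defaults

# Baseline response headers. The values are illustrative assumptions;
# tune them (especially the CSP) for the application at hand.
SECURITY_HEADERS = [
    ("Content-Security-Policy", "default-src 'self'"),
    ("X-Content-Type-Options", "nosniff"),
    ("X-Frame-Options", "DENY"),
    ("Referrer-Policy", "no-referrer"),
    ("Strict-Transport-Security", "max-age=31536000; includeSubDomains"),
]


def with_security_headers(app):
    """Wrap a WSGI app so every response carries SECURITY_HEADERS."""

    def middleware(environ, start_response):
        def patched_start_response(status, headers, exc_info=None):
            present = {name.lower() for name, _ in headers}
            # Add only the headers the wrapped app did not set itself.
            extra = [(n, v) for n, v in SECURITY_HEADERS if n.lower() not in present]
            return start_response(status, list(headers) + extra, exc_info)

        return app(environ, patched_start_response)

    return middleware


def demo_app(environ, start_response):
    """Hypothetical stand-in for a generated app that sets no security headers."""
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"hello"]


def collect_headers(app):
    """Call a WSGI app once in-process and return its response headers as a dict."""
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured.update(headers)

    environ = {}
    setup_testing_defaults(environ)
    b"".join(app(environ, start_response))
    return captured
```

Wrapping at the WSGI layer means the headers are applied to every route, including ones the AI adds later, instead of relying on each generated handler to remember them.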

There’s an even larger-scale study. The Escape.tech research team scanned over 5,600 public vibe-coded apps and found over 2,000 vulnerabilities, more than 400 exposed secret keys, and 175 cases of exposed personal data (including medical records, IBANs, and phone numbers). One app built on the Lovable platform had 18,000 users’ data exposed.
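Exposed secret keys are among the easier problems to catch mechanically. The sketch below is a deliberately small, regex-based check and not the scanner Escape.tech used. The three patterns are assumptions chosen for illustration (the `AKIA` prefix is the documented shape of AWS access key IDs; the other two are generic heuristics), and `find_secrets` is a hypothetical helper name. Dedicated secret scanners cover far more formats.

```python
import re

# Illustrative patterns only; a real scanner ships hundreds of these.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    # A credential-like name assigned a quoted literal of 8+ characters.
    "hardcoded_credential": re.compile(
        r"(?:api[_-]?key|secret|token|password)\w*\s*[:=]\s*[\"'][^\"']{8,}[\"']",
        re.IGNORECASE,
    ),
}


def find_secrets(text):
    """Return (line_number, pattern_name) pairs for lines that look like secrets."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits
```

A line such as `api_key = os.environ["API_KEY"]` is not flagged, because the value is read from the environment rather than written into the source as a literal.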

According to Kaspersky research, 45% of code generated by AI models contains OWASP Top-10 vulnerabilities, and this rate hasn’t improved in two years. Iterating code fixes 5 times with GPT-4o actually increased critical vulnerabilities by 37%.

The invisible debt: Cognitive Debt

Security vulnerabilities can at least be caught by scanners. What’s scarier is "Cognitive Debt." If technical debt lives in the code, cognitive debt is debt that lives in the developer’s head.

When AI writes code, it’s not just typing that developers skip. They skip the deep thinking about "why this architecture was chosen" and "how this component interacts with that one." Over time, they can’t even modify systems they built themselves.

The numbers make it clearer. AI agents generate 140–200 meaningful lines of code per minute, while humans read and comprehend at 20–40 lines per minute. That is a 5–7x comprehension gap, and it is widening. According to Anthropic research, skill proficiency dropped 17% among developers using AI assistance. Developers who delegated code generation to AI scored below 40% on comprehension tests, while those who used AI for concept exploration scored above 65%.
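The 5–7x figure can be reproduced from the two rate ranges. The sketch below assumes the gap was computed by pairing the low ends and the high ends of each range (140/20 and 200/40); pairing them the other way would give a wider 3.5–10x spread. The 1,000-line change in the usage note is a made-up size for illustration, and both function names are hypothetical.

```python
GENERATION_LPM = (140, 200)  # lines per minute an AI agent produces (from the text)
READING_LPM = (20, 40)       # lines per minute a human comprehends (from the text)


def comprehension_gap(generation, reading):
    """Pair low with low and high with high; return (smallest, largest) ratio."""
    ratios = [g / r for g, r in zip(generation, reading)]
    return min(ratios), max(ratios)


def review_minutes(lines, reading=READING_LPM):
    """Minutes to read `lines` lines at the fastest and slowest reading rate."""
    slow, fast = reading
    return lines / fast, lines / slow
```

`comprehension_gap(GENERATION_LPM, READING_LPM)` returns `(5.0, 7.0)`, and `review_minutes(1000)` returns `(25.0, 50.0)`: a change an agent can emit in roughly 5 to 7 minutes takes 25 to 50 minutes to actually read.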

67% of developers say that despite productivity gains, they’re spending more time debugging AI-generated code. Cognitive debt typically erupts 6–12 months later, at which point the entire team no longer understands the system.

The Essentials: How to Get Started

You can’t avoid vibe coding entirely. But the key is not mistaking "it works" for "it’s safe."

- Make security scanning the default for AI-generated code

Integrate SAST (static application security testing) tools into your CI/CD pipeline. AI-generated code shows vulnerability patterns similar to junior developer code, so automated scanning should come before human review.

- Specify security requirements in your prompts

Instead of "build me a login page," say "build a login page that guards against the OWASP Top 10. Include input validation, bcrypt hashing, and CSRF tokens." The more specific the prompt, the fewer vulnerabilities tend to slip in.

- Make time to actually read AI code

If you skip review just because generation is 5–7x faster than reading, cognitive debt piles up. At least one team member should be able to explain "why this architecture." Use AI as a "thinking partner," not a "typing replacement."

- After 3–5 failed AI fix attempts, refactor manually

Having AI keep patching the same code makes things worse, not better: in the GPT-4o experiment cited above, five rounds of AI fixes increased critical vulnerabilities by 37%. If 3–5 attempts don’t solve it, reading the code yourself and redesigning the structure is actually faster.
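As a reference point when reviewing what the AI hands back for the login-page prompt, here is a minimal sketch of the two fixes the opening example was missing: parameterized queries and salted password hashing. It is an illustration under stated assumptions, not a complete login system. The prompt names bcrypt, which is a third-party package in Python, so this sketch swaps in PBKDF2-HMAC-SHA256 from the standard library instead. The iteration count, the `users` table layout, and all function names are assumptions for the example, and CSRF tokens and input validation are left out.

```python
import hashlib
import hmac
import secrets
import sqlite3

PBKDF2_ITERATIONS = 600_000  # assumed work factor; tune for your hardware


def hash_password(password, salt=None):
    """Return (salt, digest) using PBKDF2-HMAC-SHA256 with a per-user salt."""
    salt = salt or secrets.token_bytes(16)
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, PBKDF2_ITERATIONS
    )
    return salt, digest


def create_schema(conn):
    """Create the hypothetical users table used by this sketch."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS users ("
        "username TEXT PRIMARY KEY, salt BLOB NOT NULL, digest BLOB NOT NULL)"
    )


def register(conn, username, password):
    """Store a salted hash, never the password itself."""
    salt, digest = hash_password(password)
    # Placeholders (?) keep user input out of the SQL text entirely.
    conn.execute(
        "INSERT INTO users (username, salt, digest) VALUES (?, ?, ?)",
        (username, salt, digest),
    )


def verify_login(conn, username, password):
    """Return True only if the username exists and the password matches."""
    row = conn.execute(
        "SELECT salt, digest FROM users WHERE username = ?", (username,)
    ).fetchone()
    if row is None:
        return False
    salt, expected = row
    _, candidate = hash_password(password, salt)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, expected)
```

Because the username travels as a bound parameter, an input like `' OR '1'='1` is treated as an ordinary (nonexistent) username rather than as SQL. If the generated code builds its query with string concatenation or f-strings, or stores the password column as plain text, that is the cue to stop iterating and fix it by hand.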