You've probably asked ChatGPT something and just gone "oh, okay," right? Travel plans, email drafts, even business strategy. The answer comes out smooth, so it must be right. But what if it's wrong? Wharton researchers tested roughly 1,300 people and found that when AI gave wrong answers, 80% accepted them without question. No verification.

What is this?

This is a study published in February 2026 by cognitive behavioral scientists Steven D. Shaw and Gideon Nave at the University of Pennsylvania's Wharton School. The paper's title says it all: Thinking — Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender.

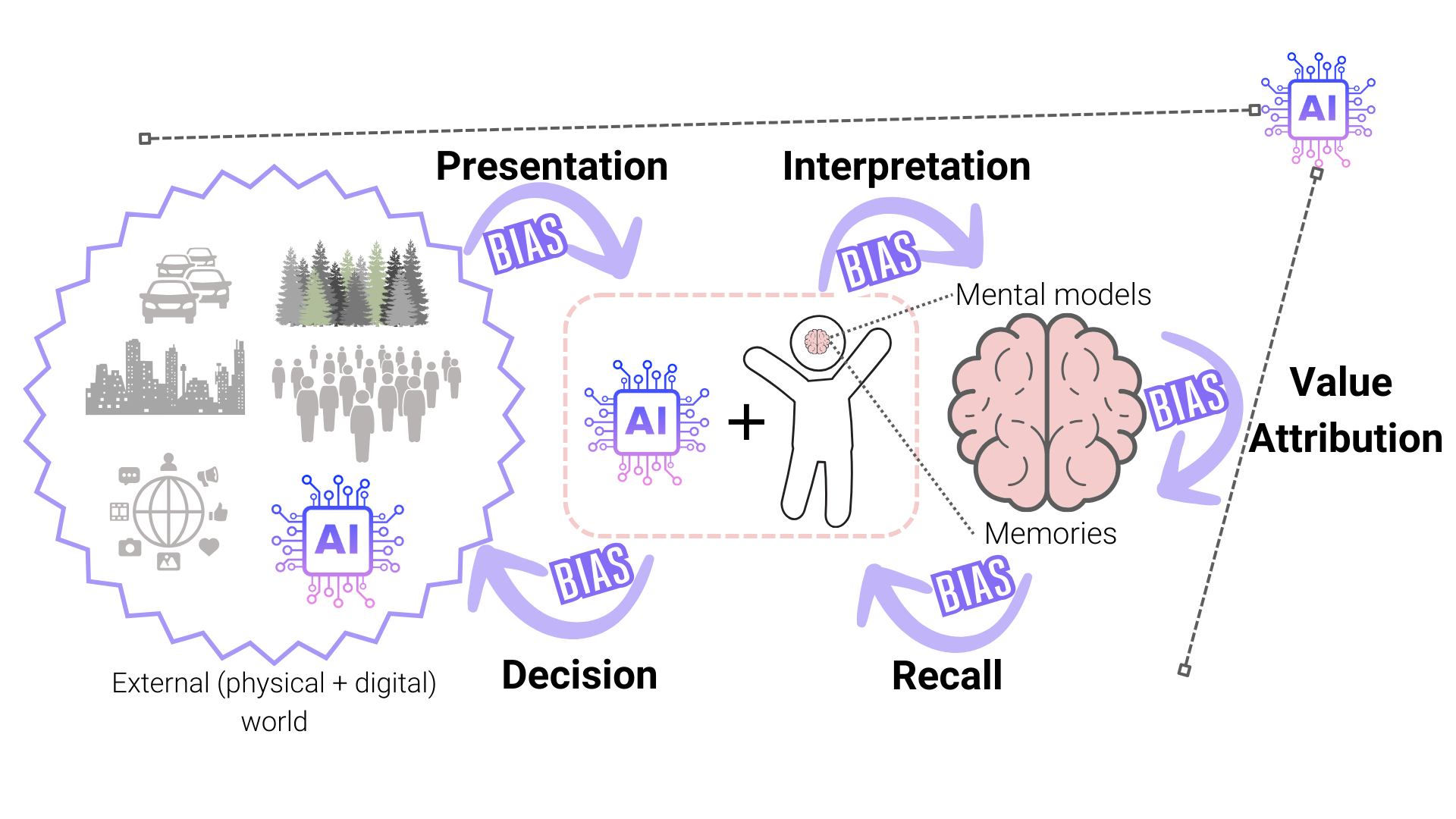

You know Daniel Kahneman's Thinking, Fast and Slow? The fast, intuitive System 1 and the slow, analytical System 2. Shaw and Nave propose adding a third system — AI as an external cognitive engine, 'System 3' — in what they call 'Tri-System Theory.'

The core argument: people aren't using AI as a 'tool.' They're outsourcing their entire thinking process to AI. The researchers call this phenomenon 'Cognitive Surrender.'

How is this different from existing 'AI distrust' research?

Previous research (like the Quinnipiac Poll) focused on people's attitudes, for example '76% say they don't trust AI.' The Wharton study measures behavior and finds the opposite: in practice, people trust AI almost unconditionally. They say 'I don't trust AI,' but when AI hands them an answer, they skip verification entirely. What people say and what they do are two different things.

What's actually changing?

The study's numbers come from 1,372 participants across more than 9,500 individual trials.

The researchers call this 'adoption without verification.' Participants asked ChatGPT questions, and when the answers came back, they skipped the entire process of evaluating whether those answers were correct.

What's scarier is the confidence effect. When AI gave wrong answers and people followed them, their subjective confidence actually went up. 'AI confirmed it, so I must be right.' They didn't even know they were wrong.

| | Calibrated Trust | Overtrust / Cognitive Surrender |
|---|---|---|
| How AI is used | One reference among many | Accepted as the final answer |
| Verification behavior | Source checking, cross-validation | Verification skipped (adoption without verification) |
| Response to wrong answers | Doubt → re-check | Accept as-is (80%) |
| Confidence shift | Adjusts based on answer quality | Rises just from using AI |
| Thinking process | System 2 engaged (analytical thinking) | Delegated to System 3 (cognitive surrender) |
| Long-term impact | Judgment preserved | Risk of judgment atrophy |

Professor Shaw puts it sharply:

"People are handing their thinking over to AI and letting it think for them. They're bypassing the brain's entire internal reasoning process."

— Steven D. Shaw, Wharton School

Why does this happen? The researchers point to the 'fluency heuristic.' AI-generated text is grammatically flawless, well-structured, and written in a confident tone. Our brains automatically interpret 'smooth information' as 'accurate information.' That's why AI hallucinations can feel more convincing than something a human wrote.

One more finding: people who engage in less analytical thinking and people who trust technology more showed more severe cognitive surrender. This means personality traits can be vulnerability factors for AI overtrust.

How to use AI without surrendering

The researchers aren't saying don't use AI. When AI is correct, accuracy jumps by 25 points — that's huge. The point is: use it, but don't surrender.

- The 'really?' 3-second rule

  When you get an AI answer, pause for 3 seconds before accepting it and ask: 'Is this actually right?' This tiny habit activates System 2. The study found that performance incentives and real-time feedback reduced cognitive surrender. Same principle.

- Demand sources from AI

  Add 'cite your sources' to your prompts. If AI can't provide sources or gives fake ones, that's your signal to doubt the answer itself. Pair with tools like Perplexity that auto-cite sources for easier cross-checking.

- Think first, then ask AI

  Frame it as: 'Here's what I think. What am I missing?' When you organize your own thoughts first and then ask AI to review, System 2 is already engaged, which reduces blind acceptance.

- Cross-verify high-stakes decisions

  For hiring, investment, or strategy decisions that are hard to reverse, don't rely on one AI. Ask the same question to a different AI model, or get a human expert's opinion. If answers diverge, dig in yourself.

- Create an 'AI red team' role in your team

  When someone says 'AI said this' in a meeting, one person should always push back: 'How did you verify that AI answer?' This single question prevents cognitive surrender at the organizational level.
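
If you want to turn the 'demand sources' and 'cross-verify' habits into something repeatable, here is a minimal Python sketch. It is an illustration, not anything from the study: `cross_check`, `normalize`, `AskFn`, and the stub models are all hypothetical names, and each "model" is just a function you would wire up to whatever AI tool you actually use. The exact-match comparison only makes sense for short factual answers; longer answers need a human to read them side by side.

```python
from collections import Counter
from typing import Callable, Dict

# Hypothetical type: any function that takes a prompt and returns an answer as text.
AskFn = Callable[[str], str]

# Appended to every question, following the 'cite your sources' tip.
SOURCE_SUFFIX = "\n\nCite your sources for each claim."


def normalize(answer: str) -> str:
    """Lowercase, collapse whitespace, and drop trailing periods for a crude comparison."""
    return " ".join(answer.lower().split()).rstrip(".")


def cross_check(question: str, models: Dict[str, AskFn]) -> dict:
    """Ask every model the same question and report whether the answers agree."""
    prompt = question + SOURCE_SUFFIX
    answers = {name: ask(prompt) for name, ask in models.items()}
    distinct = Counter(normalize(a) for a in answers.values())
    agreed = len(distinct) == 1
    return {
        "answers": answers,
        "agreed": agreed,
        # Agreement is not proof of correctness; divergence means dig in yourself.
        "action": "spot-check sources" if agreed else "verify manually",
    }


if __name__ == "__main__":
    # Stub models standing in for real AI tools (assumption: replace with real calls).
    models = {
        "model_a": lambda prompt: "Paris.",
        "model_b": lambda prompt: "Lyon",
    }
    result = cross_check("What is the capital of France?", models)
    print(result["agreed"], result["action"])
```

The design choice worth noting: even when the models agree, the suggested action is still a spot-check rather than 'accept.' Two models can share the same wrong answer, so agreement lowers the priority of verification without replacing it.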

Why do 'distrust' and 'overtrust' coexist?

76% said 'I don't trust AI' in the Quinnipiac survey, yet 80% followed AI's wrong answers in the Wharton experiment. Seems contradictory, but they're measuring different things. Surveys measure abstract attitudes ('how much do you trust AI as a technology?'), while experiments measure concrete behavior ('what do you do when an answer is right in front of you?'). People distrust AI 'in principle' but skip verification when a convenient answer appears. That's what makes cognitive surrender truly dangerous.