Competitive analysis that used to take 3–4 hours: done in 12 minutes. A $200/month technical audit tool replaced by a single prompt. The era of paying an SEO agency $5,000–$10,000 a month? It's starting to look very finite.

What Is It?

It's a way to use Claude Code as your SEO data command center. The concept is simple — export your Google Search Console, GA4, and Google Ads data as JSON files, drop them into a project folder, and Claude Code can read all those sources at once and run cross-source analysis on them.

Will Scott, a digital marketing agency owner who writes for Search Engine Land, is the person who first systematized this workflow. His take: "I'm not a developer. I run an agency. But Claude Code has become the fastest way I know to work with data."

Here's the thing — tools like Semrush and Ahrefs are great at showing you data, but combining multiple sources to reach a conclusion has always meant a human spending hours in a spreadsheet running VLOOKUPs. Claude Code replaces that manual work with a single plain-language question.

Separately, Ruben Dominguez stress-tested SEO workflows in Claude's desktop app and put together 15 prompts across 6 categories — competitive analysis, keyword strategy, technical audits, content optimization, local SEO, and backlink reporting. His verdict? "Results equivalent to what a mid-tier SEO agency charges $5K–$10K a month to deliver."

What Changes?

The biggest bottleneck in traditional SEO work is tab switching. Pull keyword data from GSC, pull traffic data from GA4, pull search term reports from Google Ads, combine everything in a spreadsheet with VLOOKUP… the data exists, but merging it eats half your day.

Once you've set up Claude Code, you can just ask questions like this in plain English:

"Compare my GSC query data against my Google Ads search terms. Find keywords where I'm already ranking well organically but still spending ad budget — and flag any keywords where I'm running ads but have zero organic presence."

— Will Scott, Search Engine Land

Here's what that analysis surfaced for one higher-education client:

| Finding | Count | What it means |
|---|---|---|
| Wasted ad spend keywords | 2,742 | Getting impressions but zero clicks |
| Ad spend reduction opportunities | 351 | Ranking organically in positions 1–5 but still running ads |
| Paid amplification candidates | 33 | Strong organic presence but no ads running |
| Content gaps | 41 | Ads only; no organic content exists at all |

How long did that analysis take? About 90 seconds. Doing the same thing manually takes half an afternoon.
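For readers who want to see what such a cross-source comparison involves, here is a minimal Python sketch of the four buckets in the table. It is not Scott's implementation: the field names (`query`, `position`, `search_term`, `impressions`, `clicks`) and the position-5 cutoff are assumptions about how the exported JSON might look, and a keyword can land in more than one bucket.

```python
from dataclasses import dataclass, field


@dataclass
class GapReport:
    wasted_spend: list = field(default_factory=list)   # ad impressions, zero ad clicks
    reduce_spend: list = field(default_factory=list)   # organic top positions, ads still running
    amplify: list = field(default_factory=list)        # strong organic presence, no ads
    content_gaps: list = field(default_factory=list)   # ads only, no organic row


def paid_organic_gap(gsc_rows, ads_rows, top_position=5.0):
    """Classify keywords by comparing GSC queries with Ads search terms.

    Row shapes are hypothetical: GSC rows carry 'query' and 'position',
    Ads rows carry 'search_term', 'impressions' and 'clicks'.
    """
    organic = {row["query"].lower(): row for row in gsc_rows}
    paid = {row["search_term"].lower(): row for row in ads_rows}

    report = GapReport()
    for term, ad in paid.items():
        if ad["impressions"] > 0 and ad["clicks"] == 0:
            report.wasted_spend.append(term)
        if term not in organic:
            report.content_gaps.append(term)
        elif organic[term]["position"] <= top_position:
            report.reduce_spend.append(term)

    for query, row in organic.items():
        if query not in paid and row["position"] <= top_position:
            report.amplify.append(query)
    return report
```

The join is an exact, case-insensitive match on the keyword text. Real search-term reports contain close variants, so a production version would likely need fuzzier matching.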

| | Traditional SEO Workflow | Claude Code SEO |
|---|---|---|
| Data integration | Download CSVs → VLOOKUP in spreadsheet | JSON files → plain-language questions |
| Cross-source analysis | Manual comparison by platform (half a day) | 90 seconds (paid-organic gap, etc.) |
| Dashboards | Build and maintain Looker Studio reports | No dashboard needed: just ask |
| Follow-up questions | Start the analysis over from scratch | Just ask a follow-up, instantly |
| Monthly refresh | 2–3 hours per client | ~20 minutes per client |
| AI search visibility | Requires a separate tool | Analyze with SERP API data in one place |

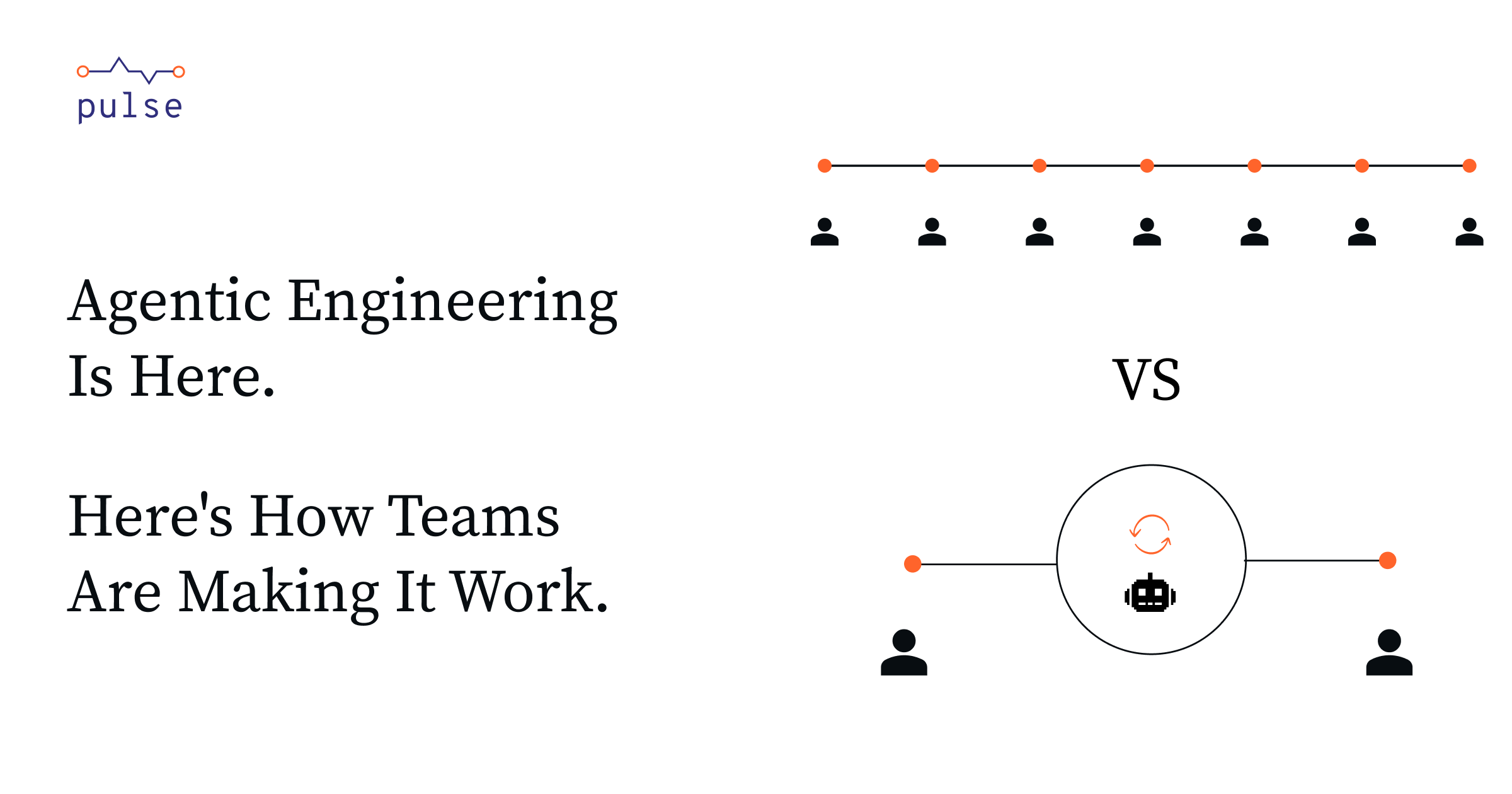

The impact is especially dramatic for agencies managing multiple clients. Cut analysis time from 2–3 hours down to 20 minutes per client, and the same team can handle 3–4× as many clients without adding headcount.

Key Takeaway

Technical audits can be automated too. Synscribe documented how to use Claude Code to automate seven technical SEO tasks, including schema markup generation, hreflang tag validation, robots.txt creation, .htaccess redirect rules, and regex filters for GSC. Some teams have cut 15+ hours of manual work per week using this approach.
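To make one of those tasks concrete, here is a small Python sketch of an hreflang check: it flags alternates that do not link back to the page that references them. This is an illustration, not Synscribe's method; the input shape (a dict mapping each page URL to its `{language: alternate URL}` annotations) and the `example.com` URLs are assumptions.

```python
def missing_return_links(hreflang_map):
    """Find hreflang annotations that are not reciprocated.

    hreflang_map: {page_url: {lang_code: alternate_url}} (hypothetical shape,
    e.g. produced by a crawler). Returns (page, lang, alternate) triples
    where the alternate page does not list `page` among its own annotations.
    """
    problems = []
    for page, alternates in hreflang_map.items():
        for lang, alt_url in alternates.items():
            if alt_url == page:
                continue  # self-referencing annotation, nothing to check
            back_links = hreflang_map.get(alt_url, {})
            if page not in back_links.values():
                problems.append((page, lang, alt_url))
    return problems


site = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    # The German page forgets to point back at the English one.
    "https://example.com/de/": {"de": "https://example.com/de/"},
}

for page, lang, alt in missing_return_links(site):
    print(f"{alt} ({lang}) has no return link to {page}")
```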

Getting Started

- Create a Google Cloud service account

  Set up a project in Google Cloud Console and enable both the Search Console API and the GA4 Data API. Create a service account, download its JSON key file, then add that service account email as a Viewer in your GSC and GA4 properties. That's all the auth you need for GSC and GA4; the Google Ads API separately requires a developer token.

- Set up your data fetchers

  Just tell Claude Code: "Write me a Python script that pulls the top 1,000 queries from GSC for the last 90 days." It'll write the script for you, so there's no need to read any API docs. Do the same for GA4 and Google Ads.

- Write a client config file

  Create a JSON file with your domain, GSC property URL, GA4 property ID, Ads customer ID, industry, and competitors. This one file becomes Claude Code's context for every analysis it runs.

- Fetch your data, then start asking questions

  Run `python3 run_fetch.py --sources gsc,ga4,ads` to collect everything, then open Claude Code and ask something like "run a paid-organic gap analysis." From initial setup to first results: about 35 minutes.

- Add an AI search visibility layer (optional)

  Start with Bing Webmaster Tools (free, and the only first-party AI citation data available), then add DataForSEO's AI Overview API (~$0.01/query) or SerpApi ($75+/month). Cross-referencing AI citation data against GSC data lets you find pages that rank well organically but are getting skipped by AI search results.
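As a rough idea of what the GSC fetcher from the steps above might look like, here is a Python sketch using the `google-api-python-client` and `google-auth` packages. The config keys, the `example.edu` values, and the output file name are all hypothetical; the script Claude Code writes for you will likely differ.

```python
import json
from datetime import date, timedelta

GSC_SCOPE = "https://www.googleapis.com/auth/webmasters.readonly"

# Hypothetical client config; key names and values are illustrative only.
CLIENT_CONFIG = {
    "domain": "example.edu",
    "gsc_property": "sc-domain:example.edu",
    "ga4_property_id": "123456789",
    "ads_customer_id": "000-000-0000",
    "industry": "higher education",
    "competitors": ["competitor.example"],
}


def build_query_body(days=90, row_limit=1000, today=None):
    """Request body for Search Console's searchanalytics.query method."""
    end = today or date.today()
    start = end - timedelta(days=days)
    return {
        "startDate": start.isoformat(),
        "endDate": end.isoformat(),
        "dimensions": ["query"],
        "rowLimit": row_limit,
    }


def fetch_top_queries(config, key_file, out_path="gsc_queries.json"):
    """Pull query rows from GSC and save them as JSON for later analysis."""
    # Imported lazily so build_query_body works without the Google libraries.
    from google.oauth2 import service_account
    from googleapiclient.discovery import build

    creds = service_account.Credentials.from_service_account_file(
        key_file, scopes=[GSC_SCOPE]
    )
    service = build("searchconsole", "v1", credentials=creds)
    response = (
        service.searchanalytics()
        .query(siteUrl=config["gsc_property"], body=build_query_body())
        .execute()
    )
    rows = response.get("rows", [])
    with open(out_path, "w", encoding="utf-8") as fh:
        json.dump(rows, fh, indent=2)
    return rows
```

Calling `fetch_top_queries(CLIENT_CONFIG, "service-account-key.json")` would write the rows to `gsc_queries.json`, assuming the service account has been added to the property as described in the first step.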

Heads Up

Claude Code won't replace strategic judgment — understanding a client's business context, navigating competitive dynamics, and making prioritization calls are still very much human work. Also, LLMs occasionally produce confident-sounding numbers that are just wrong. Before anything goes to a client, cross-check the output against the original JSON files. Practitioners consistently give the same advice: treat it like reviewing a junior analyst's work.
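One way to make that cross-check routine is to recompute any headline count directly from the exported file. The sketch below assumes the export is a JSON list of row objects; the file name and field names in the usage note are hypothetical.

```python
import json
from pathlib import Path


def recount(json_path, predicate):
    """Count rows in an exported JSON list that satisfy `predicate`."""
    rows = json.loads(Path(json_path).read_text(encoding="utf-8"))
    return sum(1 for row in rows if predicate(row))


def check_claim(label, reported, json_path, predicate):
    """Compare a number from the LLM's output with a fresh recount."""
    actual = recount(json_path, predicate)
    status = "OK" if actual == reported else "MISMATCH"
    return f"{status}: {label} reported={reported} recomputed={actual}"
```

For example, `check_claim("zero-click ad terms", 2742, "ads_search_terms.json", lambda r: r["impressions"] > 0 and r["clicks"] == 0)` would confirm or contradict a reported figure before it reaches a client.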