Claude, Codex, Cursor — you use AI coding agents every day, so why do some people work 10x faster while others are still at the copy-paste level? Looking at @systematicls's publicly shared "How to become a world-class agentic engineer," the key isn't plugins or tools — it's how you work.

What Is It?

"Agentic Engineering" is the next step beyond "Vibe Coding," coined by Andrej Karpathy in 2025. If vibe coding was "just tell it and it'll figure it out," agentic engineering is a systematic engineering methodology for designing the process where AI agents plan, write code, test, and deploy.

Peter Steinberger (who joined OpenAI) went as far as saying on a podcast: "Vibe coding is almost a slur at this point. I call what I do agentic engineering." Karpathy himself emphasized this distinction. "Agentic" because you're delegating work to agents, "engineering" because it requires design and expertise.

So what does this actually look like in practice? Synthesizing @systematicls's original post with various real-world examples, the core principles distill into five.

The key distinction

Vibe coding = "Ask ChatGPT and copy-paste"

Agentic engineering = "Design systems where agents plan, execute, verify, and deploy on their own"

Both use AI, but the quality of output is completely different.

What's Different?

Principle 1. Context Engineering — Cut the noise, feed only the precise context

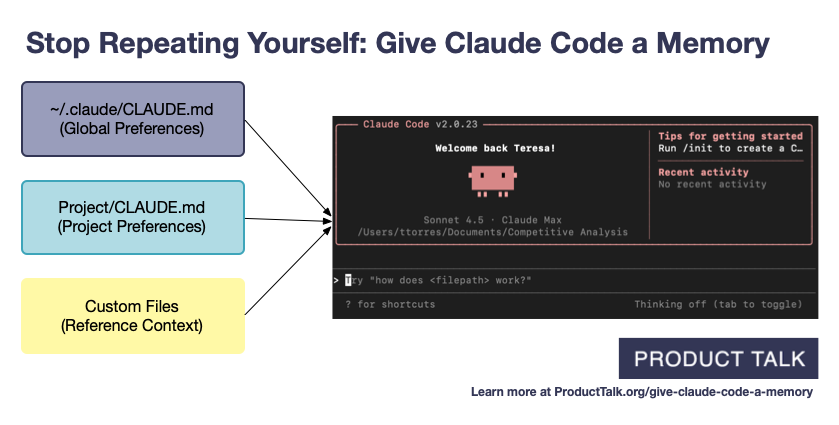

"The context window is 1 million tokens, so just dump everything in, right?" Easy to think that way, but Meta Staff Engineer John Kim nails it: "Models produce probabilistic output. You need to feed exactly the right amount for accurate results. More input doesn't mean better output." Tools like CLAUDE.md, slash commands, and MCP in Claude Code are ultimately tools for systematically managing context.

Even internally at OpenAI, they call this "Harness Engineering" and experimented with pushing domain knowledge directly into the codebase. Their conclusion was blunt: "If the domain knowledge isn't in the codebase, it doesn't exist to the agent."

Principle 2. Agentic Verification — Let the agent verify its own output

This is a key point emphasized by Boris Cherny, creator of Claude Code. If you tell the agent to write code, then manually review it, then give correction instructions... that's no different from vibe coding. Instead, you need to build loops where the agent verifies its own work. For backends, that means auto-running tests. For frontends, opening a browser with Playwright to capture and compare screenshots. For mobile, checking interactions via an ADB simulator.

PulseMCP calls this "closing the agentic loop." Once the loop is closed, a human invests 5 minutes to kick off the agent, and the agent runs autonomously for 30+ minutes, opening a PR with CI green, tests passing, and self-review complete.

| Vibe Coding (manual review) | Agentic Engineering (automated verification loop) | |

|---|---|---|

| Output verification | Developer manually reviews by eye | Agent self-verifies via tests, browser, and logs |

| Feedback speed | Waits until developer checks | Instant feedback, auto-corrects iteratively |

| Developer role | Reviews code line by line | Designs verification systems, reviews only the final PR |

| Agent runtime | One prompt → one result | 5 min investment → 30+ min autonomous execution |

| Quality consistency | Depends on developer's condition | Verification system applies consistent standards |

Principle 3. Agentic Tooling — Build tools that remove friction for agents

Peter Steinberger calls this "friction." Every point where an agent gets stuck or can't do something is an opportunity. A specific API isn't accessible via CLI? Build a CLI. A specific validation can't be automated? Build an MCP server. Steinberger credits the CLI tools he built in advance for the success of his OpenClaw project.

Simon Willison adds an important principle: "Hoard things you know how to do." Small code experiments, solved problems, prompts that worked well — accumulating these gives you a powerful reference when asking agents to build new features. In agentic engineering, a developer's real asset isn't "typing speed" but "the range of what they know is possible."

Principle 4. Agentic Codebase — Make your codebase AI-readable

Is your codebase optimized for AI agents? Probably not. Dead code, half-finished migrations with two competing patterns, conflicting frameworks... when these get into an agent's context, they act like poison. When an agent generates code with a weird pattern, it's not the agent's fault — it's the confusing signals in your codebase.

The OpenAI team went further. They standardized file structures so agents could generate code consistently, added agent-specific logging, and wrote documentation readable by both humans and agents. They're encoding their "Golden Principles" directly into the repo.

Principle 5. Compound Engineering — Let all four principles compound across the entire team

This is the "Compound Engineering" concept from Dan Schipper, co-founder of Every. Context design, verification loops, tools, codebase optimization — if only one person does these, only individual productivity improves. But when the entire team contributes knowledge to CLAUDE.md, builds new MCPs, and shares verification scripts, compound effects emerge. The next session's agent starts with more tools and context than the previous one.

These numbers come from organizations that systematically adopted agentic engineering — not individuals. Stripe's Minions system works like this: a developer posts a task in Slack, the agent writes the code, passes CI, and opens a PR. The human only does the final review and merge. From task assignment to PR — zero human involvement.

Quick Start Guide

- Rewrite your CLAUDE.md (or AGENTS.md) as an "agent onboarding doc"

Write down your project's build commands, coding conventions, and architecture decisions. Skip the obvious stuff like "we use TypeScript" and keep only what Claude is likely to get wrong. If domain knowledge isn't in the codebase, it doesn't exist to the agent. - Close one verification loop

For your most frequent task, make the agent verify its own results. For backend work, instruct "run the tests and iterate until they all pass." For frontend, use Playwright MCP to verify in the browser. Closing just one loop makes a noticeable difference. - Solve one friction point with a tool

Find the most common thing your agent can't do. Figure out if it's manually changing something on a website or calling a specific API, then build a CLI tool or MCP server to solve it. The test: "Next time this task comes up, can the agent handle it alone?" - Clean up dead code and competing patterns in your codebase

Half-finished migrations, two coexisting patterns, unused code — clean it up. Agents work probabilistically, so conflicting patterns mean they'll randomly follow either one. - Start sharing across the team & compound your gains

Commit new skills, MCP servers, and verification scripts to the repo. The test: "Can the tool I just built be used by the next person (or next agent session)?" Solo workflows don't compound.

Warning: 3 anti-patterns

These are things Simon Willison has clearly warned about.

1. Don't merge agent-written code without review. The agent's PR descriptions need human verification too.

2. Don't blindly trust agent-written tests. You can end up with "self-fulfilling tests" that pass with hardcoded values.

3. Don't settle for "it works, so it's fine." If you lower review standards just because code generation costs dropped, technical debt accumulates at AI speed.